January 8, 2016 | Microservices

Many of our clients are investigating or implementing ‘microservices’, and a question we are often asked is “what’s the most common mistake you see when moving towards a microservice architecture?” We’ve seen plenty of good things with this architectural pattern, but we have also seen a few recurring issues and anti-patterns, which I’m keen to share here.

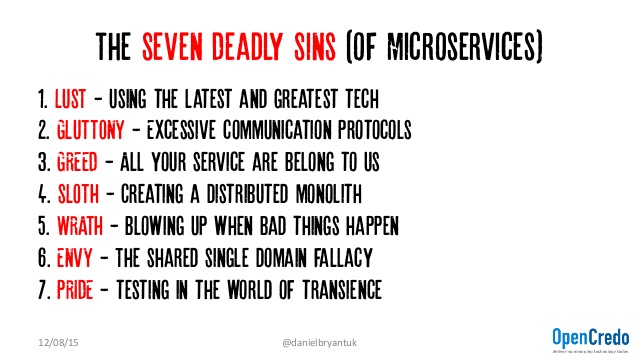

Last year Tareq Abedrabbo, CTO of OpenCredo, created a great talk (and blog post) entitled “The Seven Deadly Sins of Microservices”. Tareq and I had some fascinating discussions around the anti-patterns he identified, and this debate spread through the OpenCredo office. Ultimately this prompted me to create a second (redux) version of the talk that combines ideas from both Tareq’s and my own experiences of helping our clients to implement microservice-based architectures.

I’ve been fortunate to be able to present this new talk at QCon New York, Devoxx UK and also the Docklands London Java Community. You can find the complete video recording of the LJC event on InfoQ.com, and here are the actual ‘seven deadly sins of microservices’:

I’m going to assume that you already know what microservices are, and so let’s dive into these seven anti-patterns and provide a bit more explanation:

Here at OpenCredo we regularly work with clients to deploy the latest and greatest technology, but we always talk with our clients about using the most appropriate technology for their goals, problem or use case. We are strong supporters of emerging technologies such as Docker, Kubernetes, and Terraform, and we know the value they can bring to an IT team. However, we strive to work with an organisation to help them create their strategy and drive the change needed to support the application of this innovative technology.

The core theme of this sin is not to believe that the latest and greatest technology will solve all of your problems. As Fred Brooks famously said, there are no silver bullets in IT, and anyone who has worked within IT for a few years will know instinctively that this is true.

In the talk I emphasise a strong understanding (and definition) of the problems you are attempting to solve, the investigation of options available to you, and the creation of documentation that clearly captures the reasons, requirements and results that led you to make the decisions you did. This applies both to the use of the microservices architecture style itself and to the underlying and supporting technologies. Think about architecture, the skill of evaluation, and your business goals (are you looking for product/market fit or scaling?). Often it is ok to choose boring technology.

Wherever there is choice in technology we often see a large amount of variation within a large organisation, and with microservices this variation can often be found within the communication protocols. The resolution to this anti-pattern is to know your options – HTTP, Protocol Buffers, gRPC, Thrift, AMQP, MQTT etc – but attempt to standardise on the use of one synchronous and one asynchronous protocol. As with many things in IT, don’t gold plate, but know your options.

The ‘greed’ anti-pattern revolves around the organisational, people and cultural impact of introducing a microservice architecture. A key point I was keen to make is that this is often a bigger issue than most people appreciate! I’ve written more about this in an OpenCredo blog post “The Business Behind Microservices (Redux)”, and there is also an accompanying muCon microservice conference presentation video and slide deck at the bottom of this post.

As with any technology, it is all too easy to become lazy when implementing microservices, and this often leads to the taking of supposed ‘shortcuts’. At OpenCredo we have seen two types of failure mode repeated when implementing a microservice architecture. The first occurs when you can’t deploy services independently, and what you have is a distributed monolith. The second occurs when the same design principles and decisions that were used to create a monolith are applied to microservices, and this results in the creation of a series of “mini monoliths” or “microliths”. These microliths may be micro in nature, but they either don’t embrace the single responsibility principle or they require complex deployment procedures.

Following in Simon Brown’s footsteps, I often emphasise that architecture is a vital skill, and this includes skills around technical leadership, the promotion of shared understanding, and “just enough upfront design”. However, architecture is a function that we often see neglected within the software development industry.

The slide deck associated with this blog post contains several references and links to explore these ideas in more detail, but the core principle is to be mindful of your services’ responsibilities and APIs, and also to pay attention to the points in the system at which data is transformed (the “data fault lines”). And when I say ‘mindful’, I’m really alluding to the fact that your designs and contracts must be automatically verifiable and enforceable! Several people advise creating the interface schema first (“schema up-front design”, as Michael Bryzek put it at QCon New York 2015), and others advocate schema evolution, perhaps using consumer-driven contracts.
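To make the idea of an automatically verifiable contract a little more concrete, here is a minimal sketch of a consumer-side contract check. It is not taken from the talk and does not use any particular contract-testing tool; the `FieldExpectation` type, the `contract_violations` function, and the ‘billing’ and ‘orders’ services are all hypothetical names I have made up for illustration.

```python
from dataclasses import dataclass
from typing import Dict, List


@dataclass(frozen=True)
class FieldExpectation:
    """One field a consumer relies on: its name and expected Python type."""
    name: str
    expected_type: type


def contract_violations(response: Dict, contract: List[FieldExpectation]) -> List[str]:
    """Return a readable list of the ways a response breaks the consumer's contract."""
    violations = []
    for field in contract:
        if field.name not in response:
            violations.append(f"missing field: {field.name}")
        elif not isinstance(response[field.name], field.expected_type):
            violations.append(
                f"wrong type for {field.name}: expected "
                f"{field.expected_type.__name__}, "
                f"got {type(response[field.name]).__name__}"
            )
    return violations


# Hypothetical contract: what a 'billing' consumer needs from an 'orders' service.
BILLING_CONTRACT = [
    FieldExpectation("order_id", str),
    FieldExpectation("total_pence", int),
]

# Adding a field is compatible; removing or renaming a relied-upon field is not.
compatible_response = {"order_id": "o-123", "total_pence": 4200, "currency": "GBP"}
breaking_response = {"order_id": "o-123", "total": "42.00"}
```

Run as part of the provider’s build, a check along these lines would pass for `compatible_response` and report `missing field: total_pence` for `breaking_response`, which is the kind of early feedback that lets a schema evolve without surprising its consumers.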

The vast majority of microservice-based applications are going to be running as distributed systems, and therefore we must respect this, both as developers and operators. As developers we need to code defensively, and utilise fault-tolerant patterns such as time-outs, retries, circuit-breakers and bulkheads. Michael Nygard’s excellent ‘Release It!’ book should be mandatory reading, as should a visit to the Netflix OSS tooling GitHub repository! I’ve also put several links in the presentation to distributed systems articles I have found useful, such as “Distributed systems theory for the distributed systems engineer”.
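As an illustration of one of these patterns, here is a minimal circuit-breaker sketch. It is my own simplified version rather than the implementation found in any particular library: the class name, the parameter names and the default values are assumptions, it counts only consecutive failures, and it is not thread-safe.

```python
import time


class CircuitOpenError(Exception):
    """Raised when a call is rejected because the circuit is open."""


class CircuitBreaker:
    def __init__(self, failure_threshold=3, reset_timeout_seconds=30.0,
                 clock=time.monotonic):
        self.failure_threshold = failure_threshold
        self.reset_timeout_seconds = reset_timeout_seconds
        self._clock = clock  # injectable so tests need not sleep
        self._consecutive_failures = 0
        self._opened_at = None  # None means the circuit is closed

    def call(self, operation):
        """Run operation(), failing fast while the circuit is open."""
        if self._opened_at is not None:
            if self._clock() - self._opened_at < self.reset_timeout_seconds:
                raise CircuitOpenError("circuit open; failing fast")
            # The reset timeout has elapsed, so let one trial call through.
        try:
            result = operation()
        except Exception:
            self._consecutive_failures += 1
            was_trial_call = self._opened_at is not None
            if was_trial_call or self._consecutive_failures >= self.failure_threshold:
                self._opened_at = self._clock()  # open (or re-open) the circuit
            raise
        # Success closes the circuit and clears the failure count.
        self._consecutive_failures = 0
        self._opened_at = None
        return result
```

A caller would wrap each remote call, for example `breaker.call(lambda: fetch_orders())`, where `fetch_orders` is a hypothetical function that should itself apply a time-out. The point of the pattern is that once a dependency looks unhealthy, callers stop queuing up behind it and fail fast instead.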

As operators or sysadmins, we need to embrace the ‘DevOps’ mentality of shared understanding and responsibility, and also spend an appropriate amount of time developing and working with tooling to support our role. As Martin Fowler has already stated, rapid provisioning, basic monitoring and rapid application deployment are microservice prerequisites. Adrian Cockcroft has also recently presented a talk with some excellent pointers as to the challenges with monitoring microservices and containers, which is definitely something I have struggled with in the past.

In addition to the technical challenges, there are also social issues to work with. In the presentation I talk about the fact that “failure happens all of the time”, and share learnings from industry experts I have met through InfoQ and the QCon conference series. The core arguments I made were that pain (and success) must be shared throughout the team, from operations to development (“dev-on-call”), and that because people are often both the cause of and the solution to the issues, real-life disaster recovery training must be regularly undertaken.

With a monolith the notion of developing a single shared domain model was often ‘best practice’, as this would be used throughout the code base. However, with microservices the Domain-Driven Design (DDD) principles of ‘bounded contexts’ have become popular, and these support us in properly modelling, defining and encapsulating our domains.
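A small sketch may help to show what this looks like in code. Rather than one shared `Customer` class, each context owns a model containing only what it needs, and translates incoming data at its boundary. The two contexts, the field names and the event shape below are hypothetical examples of mine, not something from the talk.

```python
from dataclasses import dataclass
from typing import Dict


# Billing context: cares about how to invoice a customer.
@dataclass(frozen=True)
class BillingCustomer:
    customer_id: str
    billing_email: str
    vat_number: str


# Shipping context: cares about where to send parcels.
@dataclass(frozen=True)
class ShippingRecipient:
    customer_id: str
    full_name: str
    delivery_postcode: str


def recipient_from_registration_event(event: Dict) -> ShippingRecipient:
    """Translate an upstream event into the shipping context's own model,
    copying only the fields this context needs."""
    return ShippingRecipient(
        customer_id=event["customer_id"],
        full_name=event["full_name"],
        delivery_postcode=event["delivery_postcode"],
    )
```

The two models share only an identifier, so a change to how billing represents VAT details has no reason to ripple into the shipping service.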

In the talk I also mention an often-seen data/analytics-related challenge for organisations moving to microservices, which is the increased distribution of data. This can be a big challenge for the data science or analytics team – with a monolithic application they simply reached into the associated monolithic database and pulled out the data they wanted (often aggregated and formatted nicely via SQL). This issue can be compounded by the ease (and developer benefit) of introducing NoSQL solutions into the stack. Potential solutions are implementing tools/services to ‘pull’ data from the respective data stores into something like a data lake, or ‘pushing’ data change events to interested services or data aggregators.
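The ‘push’ option can be sketched roughly as follows. This is an in-memory illustration only: in a real system the bus would be a message broker, and the `DataChangeEvent`, `InMemoryEventBus` and `AnalyticsAggregator` names are hypothetical ones I have chosen for the example.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Tuple


@dataclass(frozen=True)
class DataChangeEvent:
    source_service: str
    entity: str
    entity_id: str
    payload: Dict


class InMemoryEventBus:
    """Stand-in for a real message broker, for illustration only."""

    def __init__(self) -> None:
        self._subscribers: List[Callable[[DataChangeEvent], None]] = []

    def subscribe(self, handler: Callable[[DataChangeEvent], None]) -> None:
        self._subscribers.append(handler)

    def publish(self, event: DataChangeEvent) -> None:
        # Deliver the event to every interested subscriber, in order.
        for handler in self._subscribers:
            handler(event)


class AnalyticsAggregator:
    """Keeps the latest known state of each entity across all services."""

    def __init__(self) -> None:
        self.latest: Dict[Tuple[str, str, str], Dict] = {}

    def handle(self, event: DataChangeEvent) -> None:
        key = (event.source_service, event.entity, event.entity_id)
        self.latest[key] = event.payload
```

Each service publishes an event whenever its own data changes, and the analytics team subscribes an aggregator with `bus.subscribe(aggregator.handle)`, so they get a consolidated view without reaching into any service’s private data store.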

In my experience it is almost always more difficult to test microservices than to test a monolith. However, difficult does not mean impossible, and accordingly we need to create new tooling and processes to support testing in a fast-moving, volatile and transient world. In my talk I referenced the good work by Toby Clemson in sharing his ‘Testing Strategies in a Microservice Architecture’, but much work still needs to be done. Many organisations that have been creating microservices for some time are arguing that the only effective way to test microservices is in production, because the emergent behaviour of the system is impossible to predict in a synthetic staging environment.

I often use tooling such as Serenity BDD, Tom Akehurst’s excellent WireMock and Saboteur, the Jenkins Performance Plugin, and Gatling.io or Flood.io.

As many people have already said, microservices can truly add a lot of flexibility to the development and deployment process, but there clearly are challenges. In this blog post, and the accompanying talk on InfoQ, I have shared some of my learnings, and discussed my “Seven Deadly Sins of Microservices” from the past several years of working on the front-lines of microservice implementations.

Please do get in touch if you have any questions or would like any advice on microservices. You can find me on Twitter @danielbryantuk, and via email at daniel.bryant@opencredo.com. We have a range of experience and expertise at OpenCredo, and regularly work on a range of projects, from integrating with your team to deliver specific leading-edge technical solutions, to implementing large-scale organisational transformation towards the practices of agile, lean and DevOps.
