October 7, 2015 | Software Consultancy

It’s well known that predicting how long a project/task will take in IT is hard. In this post I’ll address one aspect of this (correlation) and ask what insights a data science perspective can give us about how correlations can make prediction difficult. I’ll explain the problems that correlation poses, give some practical advice for teams & project managers and investigate possible innovations to tooling that might improve matters.

To best appreciate the problem with correlation we will indulge ourselves with a few illustrative examples.

Consider a factory manager at Dodgy Electronics, a firm that manufactures light bulbs. The bulbs have to be produced to certain safety specifications. She estimates that the risk of any given light bulb being faulty is 1 in 10,000. A faulty light bulb exposes the company to a risk of litigation, so it’s important that she can work out an upper bound on the number of faulty light bulbs being produced and knows how much money to put aside to cover litigation. After a production run of 1,000,000 light bulbs she expects to see about 100 faulty light bulbs and budgets based on a maximum of 200 proving faulty. However, in the event, 10,000 light bulbs are faulty. The ensuing litigation puts Dodgy Electronics out of business.

A new housing insurance company, Naive Insurers, calculates that the risk of flood damage to a property in a certain city is 0.3% per year. The owners of 1,000 homes in this city take out insurance policies with them. One of their analysts calculates that they can expect 3 houses to be flooded in any given year, so he recommends they budget for 6. In the first 10 years no houses at all get flooded, but then in the 11th year 900 houses get flooded. The insurance company doesn’t have enough money to pay up and declares itself bankrupt.

A project manager has 20 tasks that need to be estimated. 5 of them involve work on database tables and the remaining 15 involve creating a new UI screen. Janet estimated each of the 5 database tasks as taking half a day, and Bob estimated the 15 remaining tasks (with varying estimates), for a total of 25 days. When it actually came to completing the tasks, though, the first 5 took a day in total and the remaining 15 took 45 days! The project is now substantially over budget.

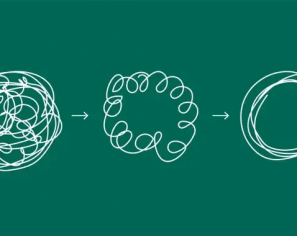

What’s going wrong? The problem is correlation. In each example the individual estimates may well be reasonable given the time and available information. But moving from these estimates for individual items to a global prediction for their aggregate is not working. The problem is that the errors in the individual estimates are correlated with each other. Now for just a little mathematics:

Two random events are regarded as independent if the probability of one occurring does not depend in any way on whether the other occurs. A good example of this is throwing a coin twice: the outcome of the second throw (heads or tails) does not depend on the outcome of the first. By contrast, if two random events are not independent, then knowing the outcome of the first can tell you something, sometimes a lot, about the outcome of the second. Each of the examples above showcases this (indeed, more specifically, they’re examples of correlation). To explain fully, let’s revisit them and see how the presence of correlation causes the difficulty in prediction.

The factory produces light bulbs in batches of 10,000. For each batch, a key piece of machinery can be misconfigured, with a likelihood of 1 in 10,000. If it is misconfigured then every light bulb in that batch will be faulty. If it’s well configured then almost all light bulbs will pass the specification. One million light bulbs is 100 batches of 10,000. The chance of at least one of these batches failing is approximately 1% (low, but not so low that you should ignore it when worrying about critical risks). But if a batch does fail then at least 10,000 light bulbs will fail the specification. The error here was assuming that, for two light bulbs in the same batch, the probability of one being faulty is independent of the other being faulty. In fact, if you know one is faulty then you can be pretty sure the other one is too.
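The arithmetic behind the light bulb example can be checked with a short Python sketch. It assumes, as a simplification, that a well-configured batch produces no faulty bulbs at all (the text only says that almost all pass), and the function names are purely illustrative. Both models give the same expected number of faulty bulbs, but very different spreads.

```python
import math

BULBS_PER_BATCH = 10_000
BATCHES = 100
P_FAULT = 1 / 10_000  # per-bulb fault risk, and per-batch misconfiguration risk


def independent_model():
    """Mean and std dev of faulty bulbs if every bulb fails independently."""
    n = BULBS_PER_BATCH * BATCHES
    mean = n * P_FAULT
    std = math.sqrt(n * P_FAULT * (1 - P_FAULT))
    return mean, std


def batch_model():
    """Mean and std dev if a misconfigured batch ruins all of its bulbs.

    Simplifying assumption: a well-configured batch has zero faulty bulbs.
    """
    mean = BATCHES * P_FAULT * BULBS_PER_BATCH
    std = BULBS_PER_BATCH * math.sqrt(BATCHES * P_FAULT * (1 - P_FAULT))
    return mean, std


def prob_at_least_one_bad_batch():
    """Chance that at least one of the batches is misconfigured."""
    return 1 - (1 - P_FAULT) ** BATCHES


if __name__ == "__main__":
    print("independent bulbs (mean, std):", independent_model())
    print("correlated batches (mean, std):", batch_model())
    print("P(at least one bad batch):", prob_at_least_one_bad_batch())
```

Under independence the standard deviation is about 10 bulbs, so a budget of 200 looks extremely safe. Under the batch model the mean is still 100, but the standard deviation is roughly 1,000 bulbs, which is why the budget offers no real protection.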

The houses were in the same city. When the river broke its banks, most of them were at the same height above the river and so got flooded simultaneously. Again, the problem was that the likelihood of one house being flooded was not independent of that of its neighbour being flooded.
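As a rough check on the analyst’s reasoning, the sketch below computes how often the budget of 6 flooded houses would be exceeded if houses really did flood independently, and compares that with a simple common-cause model. The common-cause model is an assumption made for illustration: in a flood year a fixed 90% of houses are hit (matching the 900 in the story), and the flood-year probability is chosen so that each house still has a 0.3% annual risk.

```python
import math

HOUSES = 1_000
P_HOUSE = 0.003      # annual flood risk per house, from the example
BUDGETED = 6         # number of flooded houses the analyst budgets for
FRACTION_HIT = 0.9   # assumed share of houses flooded in a flood year


def binomial_tail(n, p, k):
    """P(X > k) for X ~ Binomial(n, p), i.e. houses flooding independently."""
    cdf = sum(math.comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k + 1))
    return 1 - cdf


def flood_year_probability(p_house=P_HOUSE, fraction_hit=FRACTION_HIT):
    """Flood-year probability that keeps the per-house risk at p_house."""
    return p_house / fraction_hit


def prob_flood_within(years, q):
    """P(at least one flood year within the given number of years)."""
    return 1 - (1 - q) ** years


if __name__ == "__main__":
    q = flood_year_probability()
    print("independent: P(more than 6 floods in a year) =",
          binomial_tail(HOUSES, P_HOUSE, BUDGETED))
    print("common cause: P(flood year) =", q)
    print("common cause: houses hit in a flood year =", HOUSES * FRACTION_HIT)
    print("common cause: P(flood within 11 years) =", prob_flood_within(11, q))
```

In this toy model the budget is actually exceeded less often than under independence, but when it is exceeded the loss is 900 houses rather than 7 or 8. The per-house risk is identical in both models; only the correlation differs.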

Both of these examples are straightforward, with a very clear degree of correlation present. However, in the real world there are generally various correlations of varying degrees operating in the background, many of which we may remain unaware of.

Regarding the 15 tasks, they all required testing. Because of the use of JavaScript on the page, testing took longer than expected for all of the tasks. As for the 5 tasks that finished early, probably no one noticed, because people don’t generally complain about things going well.

Regarding the amount of time it takes to complete a task, it’s worth noting that the best that can happen is that a task takes essentially no time (a saving of 100% on any prediction), but there is no limit to how badly something can overrun. For the mathematicians out there, I offer this hypothesis: the errors in timings for tasks (and projects) are closer to log-normal than to normal.
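To see what the log-normal hypothesis would imply, here is a small Python sketch. It assumes that the ratio of actual to estimated time is log-normal with median 1 (so half of all tasks overrun and half come in under) and a spread `sigma` on the log scale. Both the model and the value of `sigma` are assumptions for illustration, not measured data.

```python
import math
from statistics import NormalDist


def mean_overrun_ratio(sigma):
    """Mean of actual/estimate when log(actual/estimate) ~ Normal(0, sigma^2)."""
    return math.exp(sigma**2 / 2)


def ratio_interval(sigma, coverage=0.90):
    """Central interval for actual/estimate under the same assumption."""
    z = NormalDist().inv_cdf(0.5 + coverage / 2)
    return math.exp(-z * sigma), math.exp(z * sigma)


if __name__ == "__main__":
    sigma = 0.5  # hypothetical spread on the log scale
    low, high = ratio_interval(sigma)
    print("mean actual/estimate:", mean_overrun_ratio(sigma))
    print("90% interval for actual/estimate:", (low, high))
```

The interval is symmetric on the log scale but lopsided in days: the upper bound sits further above 1 than the lower bound sits below it. Even when the median ratio is exactly 1, the mean is above 1, so overruns outweigh savings on average.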

Our last example illustrates how the curse of correlation is a problem for project management. Any team or individual estimating the amount of work that’ll get done in a sprint is estimating dozens of tasks with various degrees of correlation with each other. This may be due to tasks relying on developer or QA estimates from the same person (who will have their own systematic biases), tasks having similar technical content, tasks having similar dependencies/blockers or some other unknown common factor.
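One way to quantify this is the standard formula for the variance of a sum of correlated quantities. The sketch below assumes, purely for illustration, that every task’s error has the same standard deviation `sigma` and that every pair of tasks shares the same correlation `rho`; real sprints will be messier.

```python
import math


def std_of_total(n_tasks, sigma, rho):
    """Std dev of the summed error of n equally correlated tasks.

    Uses Var(sum) = n * sigma^2 * (1 + (n - 1) * rho).
    """
    variance = n_tasks * sigma**2 * (1 + (n_tasks - 1) * rho)
    return math.sqrt(variance)


if __name__ == "__main__":
    # 15 tasks, each uncertain by about one day (hypothetical figures)
    for rho in (0.0, 0.2, 0.5, 1.0):
        total = std_of_total(15, 1.0, rho)
        print(f"rho={rho:.1f}: std of total = {total:.2f} days")
```

With independent errors the uncertainty of the total grows like the square root of the number of tasks; with perfectly correlated errors it grows in proportion to the number of tasks. Anything in between still inflates the error bars well beyond the independent case.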

Because there is so much uncertainty in work estimates, I advise giving error bars on all estimates you make as a team. Given the problems with building sprint-level estimates up from task-level estimates, I recommend tracking the deviation between your estimates and the actual time taken in order to come up with realistic error bars. Here it would be nice to know the distribution of errors in the industry as a whole (I repeat my conjecture that the errors are log-normally distributed), as this might help us make more accurate predictions.
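A minimal sketch of how a team might turn tracked deviations into error bars is shown below. It works on sprint-level totals, so whatever correlation exists between tasks is already baked into the history, and it leans on the log-normal conjecture by working with the logarithm of actual over estimate. The function name, the `z` value and the sample history are all made up for illustration.

```python
import math
import statistics


def error_bars(history, new_estimate, z=1.645):
    """Return (low, central, high) for a new sprint estimate.

    history: list of (estimated_days, actual_days) for past sprints.
    z: normal quantile; 1.645 gives roughly a 90% interval.
    """
    log_ratios = [math.log(actual / estimate) for estimate, actual in history]
    mu = statistics.mean(log_ratios)      # systematic bias on the log scale
    sigma = statistics.stdev(log_ratios)  # spread on the log scale
    low = new_estimate * math.exp(mu - z * sigma)
    central = new_estimate * math.exp(mu)
    high = new_estimate * math.exp(mu + z * sigma)
    return low, central, high


if __name__ == "__main__":
    # Invented history of (estimated, actual) sprint totals in days
    past_sprints = [(10, 12), (8, 8), (12, 18), (10, 11)]
    print(error_bars(past_sprints, new_estimate=10))
```

With only a handful of sprints the estimated spread is itself very uncertain, so the resulting interval should be treated as a starting point that tightens as more history accumulates.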

Project managers and teams should be aware that when a sprint contains a lot of work of a similar sort (especially where it is somehow of a new type, such as a new technology or technique), the uncertainty in the estimate is that much higher. It’s natural to assume that although some sprints will be underestimated, others will be overestimated, and that the errors will cancel each other out on average. However, these errors too may be correlated.

So, the main takeaway message here is that correlations gum up your predictions. All else being equal, the more similarity there is between the subtasks in your sprint, the more likely it is that problematic correlations are present, and so the larger the error bars you should quote.

I have one final point to make concerning tooling:

There are too many variables in predictions (the person making the prediction, the technologies involved, the module worked on, etc.) for a team or a project manager to attempt to measure how these variables manifest in correlations and how they affect predictions at the level of sprints. However, the number of variables may be small enough that, if we can get hold of some good data, heuristic systems would be able to improve the reliability of predictions significantly. There is an opportunity to build heuristic systems that estimate work at the sprint level based on estimates for tasks, actual times for tasks and some metadata about those tasks. Large companies with lots of project management data and the potential to gather more are best placed to take advantage of this (Atlassian, I’m looking at you!).

This blog is written exclusively by the OpenCredo team. We do not accept external contributions.
