February 6, 2018 | Cloud

Among the many announcements made at re:Invent 2017 was the release of AWS PrivateLink for Customer and Partner services. We believe that the opportunity signalled by this modest announcement may have an impact far broader than first impressions suggest.

So let’s look at it in more depth.

Amazon Virtual Private Cloud (Amazon VPC) lets you provision a logically isolated section of the AWS Cloud where you can launch AWS resources in a virtual network that you define. You have complete control over your virtual networking environment, including selection of your own IP address range, creation of subnets, and configuration of route tables and network gateways.

https://aws.amazon.com/vpc/

VPC is a fundamental unit of isolation within AWS: a virtual network which allows you to create cloud resources and govern the interactions between them. All traffic within the VPC is private to the VPC.

VPC was announced on 25 August 2009, and AWS now strongly guides all architectures towards adopting it. However, many services predate VPC and/or utilise the original model of public internet access, so they are not directly available from within a VPC. These include S3, SQS, SNS, DynamoDB and many more. This is less than ideal for several reasons, not least that instances need a route through an internet or NAT gateway just to reach them.

AWS responded by introducing “VPC Endpoints”, initially for S3 and later for DynamoDB. These provide a gateway within the VPC directly to the AWS service. Once configured, requests to the service are routed through the gateway automatically, courtesy of an SDN-magical pl-* prefix-list entry in the route table.

A VPC endpoint enables you to privately connect your VPC to supported AWS services and VPC endpoint services powered by PrivateLink without requiring an internet gateway, NAT device, VPN connection, or AWS Direct Connect connection. Instances in your VPC do not require public IP addresses to communicate with resources in the service. Traffic between your VPC and the other service does not leave the Amazon network.

https://docs.aws.amazon.com/AmazonVPC/latest/UserGuide/vpc-endpoints.html

So, no public internet access is required. And for those worried about exfiltration, you can attach IAM-style policies to the endpoint controlling which principals may interact with which resources, and how: more plainly, which users/roles can access which S3 buckets.*
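To make the endpoint policy idea concrete, here is a minimal sketch in Python, assuming boto3 (the AWS SDK for Python) supplies the `ec2` client. The helper names are hypothetical, the bucket names, region, VPC and route table IDs are placeholders, and the S3 actions listed are illustrative rather than a recommended policy. The client is passed in as an argument so the functions can be exercised without AWS credentials.

```python
import json


def s3_bucket_endpoint_policy(bucket_names, actions=("s3:GetObject", "s3:PutObject", "s3:ListBucket")):
    """Build an endpoint policy that allows the given S3 actions only on the named buckets."""
    resources = []
    for name in bucket_names:
        resources.append(f"arn:aws:s3:::{name}")    # the bucket itself (needed for ListBucket)
        resources.append(f"arn:aws:s3:::{name}/*")  # the objects inside the bucket
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "AllowApprovedBucketsOnly",
            "Effect": "Allow",
            "Principal": "*",
            "Action": list(actions),
            "Resource": resources,
        }],
    }


def create_s3_gateway_endpoint(ec2, vpc_id, route_table_ids, region, bucket_names):
    """Create an S3 gateway endpoint with the restrictive policy attached.

    `ec2` is assumed to be boto3.client("ec2"), or any stand-in exposing
    a compatible create_vpc_endpoint method.
    """
    response = ec2.create_vpc_endpoint(
        VpcEndpointType="Gateway",
        VpcId=vpc_id,
        ServiceName=f"com.amazonaws.{region}.s3",
        RouteTableIds=route_table_ids,  # AWS adds the pl-* prefix-list route to these tables
        PolicyDocument=json.dumps(s3_bucket_endpoint_policy(bucket_names)),
    )
    return response["VpcEndpoint"]["VpcEndpointId"]
```

Any request through the endpoint that falls outside the listed buckets is then refused at the endpoint, whatever the caller's own IAM permissions allow.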

Then in November 2017, AWS announced PrivateLink, a new generation of endpoints.

With traditional endpoints, it’s very much like connecting a virtual cable between your VPC and the AWS service. Connectivity to the AWS service does not require an Internet or NAT gateway, but the endpoint remains outside of your VPC. With PrivateLink, endpoints are instead created directly inside of your VPC, using Elastic Network Interfaces (ENIs) and IP addresses in your VPC’s subnets. The service is now in your VPC, enabling connectivity to AWS services via private IP addresses. That means that VPC Security Groups can be used to manage access to the endpoints and that PrivateLink endpoints can also be accessed from your premises via AWS Direct Connect.

https://aws.amazon.com/blogs/aws/new-aws-privatelink-endpoints-kinesis-ec2-systems-manager-and-elb-apis-in-your-vpc/

So we have services exposed as Elastic Network Interfaces and subject to all the typical IaaS controls: subnets, NACLs and security groups. A service managed by AWS now appears alongside services you are running yourself.

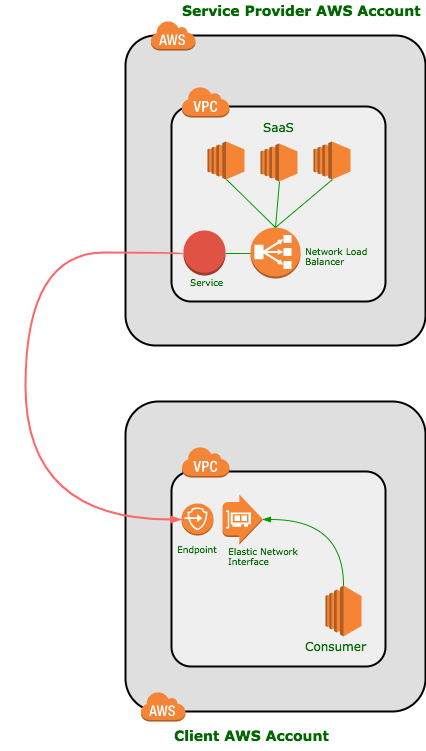

Finally, at re:Invent 2017, Amazon updated PrivateLink to allow customers' own services to be delivered via this mechanism.

Companies can now create services and offer them for sale to other AWS customers, for access via a private connection. They create a service that accepts TCP traffic, host it behind a Network Load Balancer, and then make the service available, either directly or in AWS Marketplace. They will be notified of new subscription requests and can choose to accept or reject each one. I expect that this feature will be used to create a strong, vibrant ecosystem of service providers in 2018.

https://aws.amazon.com/blogs/aws/aws-privatelink-update-vpc-endpoints-for-your-own-applications-services/
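The provider-side workflow in the quote above (host a TCP service behind a Network Load Balancer, publish it, then accept or reject each subscription request) might look roughly like this with boto3. This is a sketch that assumes an NLB already exists. The function names and the approval rule are hypothetical, and pagination of `describe_vpc_endpoint_connections` is ignored for brevity.

```python
def publish_endpoint_service(ec2, nlb_arn, consumer_principal_arns):
    """Register an existing NLB as a PrivateLink endpoint service and allow named consumers.

    `ec2` is assumed to be boto3.client("ec2"), or any stand-in with the same methods.
    """
    config = ec2.create_vpc_endpoint_service_configuration(
        NetworkLoadBalancerArns=[nlb_arn],
        AcceptanceRequired=True,  # every connection request must be explicitly approved
    )["ServiceConfiguration"]
    # Only these principals (accounts, users or roles) may request a connection at all.
    ec2.modify_vpc_endpoint_service_permissions(
        ServiceId=config["ServiceId"],
        AddAllowedPrincipals=list(consumer_principal_arns),
    )
    return config["ServiceId"], config["ServiceName"]


def review_pending_requests(ec2, service_id, is_approved):
    """Accept or reject each pending connection request using a caller-supplied rule."""
    pending = ec2.describe_vpc_endpoint_connections(
        Filters=[
            {"Name": "service-id", "Values": [service_id]},
            {"Name": "vpc-endpoint-state", "Values": ["pendingAcceptance"]},
        ]
    )["VpcEndpointConnections"]
    accept = [c["VpcEndpointId"] for c in pending if is_approved(c["VpcEndpointOwner"])]
    reject = [c["VpcEndpointId"] for c in pending if not is_approved(c["VpcEndpointOwner"])]
    if accept:
        ec2.accept_vpc_endpoint_connections(ServiceId=service_id, VpcEndpointIds=accept)
    if reject:
        ec2.reject_vpc_endpoint_connections(ServiceId=service_id, VpcEndpointIds=reject)
    return accept, reject
```

The consumer is given the returned `ServiceName`, and the `is_approved` callback is where a vendor could plug in its own subscription or billing check.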

The PrivateLink update at re:Invent gives service providers a route to make services available on AWS Marketplace and sell them to customers. A service managed by a third party now appears alongside services you are running yourself.

Whilst carefully avoiding any lengthy debate about the specific taxonomies of Serverless, we’ll characterise it here as a continuum between manage-it-yourself and someone-else-manages-it.

Many services, both open source and off-the-shelf, are difficult to operate. Particularly with distributed systems and databases, it can be a struggle to resource the day-to-day management of these complex systems. As a result, we see a market squeeze on DevOps, infrastructure automation and other skill-sets.

For this reason, it seems likely that serverless options – where a varying degree of responsibility for operations is outsourced to AWS – will become increasingly attractive. We are seeing more and more managed components on AWS (and other clouds) as they evolve their proposition from pure IaaS to PaaS.

Many of the criticisms levelled at Serverless revolve around it being “…one of the worst forms of proprietary lock-in that we’ve ever seen in the history of humanity…”

Agreed, certain services such as Lambda are hopelessly tied into AWS. However, other services such as persistence stores, queues and other architectural components are reasonably independent and potentially fungible.

So we see the provision of somewhat serverless but standardised and transferable services. These include Amazon Elastic Container Service for Kubernetes (EKS) and Fargate in the container space, Amazon MQ (ActiveMQ) and Amazon Elasticsearch Service.

The PrivateLink update at re:Invent takes this a step further, giving service providers a route to make services available to customers and list them on AWS Marketplace. In this way, partners and vendors can create services that are consumed in exactly the same way as AWS services.

We’ve seen this before somewhere… Ah yes…

When Amazon opened up its marketplace to third-party sellers, some questions were asked. The bottom line is that Amazon generates revenue either way, and it can offer a proposition broader than it could deliver itself: in the retail case, extending the long tail to items it would never consider stocking or that are no longer directly obtainable.

And so it is with PrivateLink. AWS are opening up their PaaS to third parties and can expect the number of services offered on the platform to grow rapidly. Yes, we have always been able to consume SaaS services from within AWS, but this represents a significant reduction in procurement and compliance friction now that the services are directly consumable and billable from within the AWS environment.

So to review, if we were looking to deliver a third-party service into AWS as a commercial offering, the following options have been available:

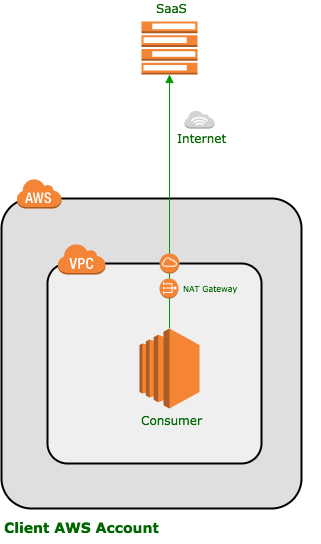

Software as a service (SaaS) is delivered over the public internet.

This leaves the provider free to choose their own platform, and is still a good option where the service is hosted in a non-AWS environment.

However, the data traverses the public internet, which may be a no-no for compliance.

Similarly, the client infrastructure must have internet access, which can be a broad security and compliance issue where those concerns apply.

There are also latency and bandwidth considerations, as data must pass from one datacenter to another. Even where both systems reside on AWS, traffic is pushed out onto the internet (or at least the AWS public network) and must travel much further than private traffic.

Traffic leaving AWS also incurs data transfer charges.

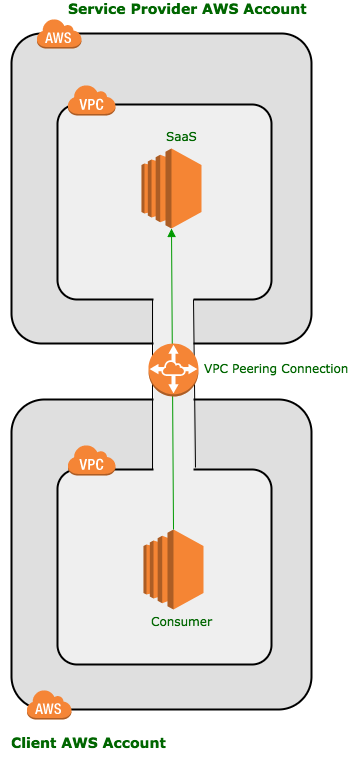

In the VPC Peering scenario, we connect the Virtual Private Cloud (VPC) of the client to a VPC of the service provider. This keeps all traffic private.

This is a common delivery pattern at present, e.g. MongoDB Atlas.

A VPC peering connection provides two-way access between the VPCs, which, if not properly secured, opens the door to the service provider reaching into the client's network.

It also requires the two VPCs to have non-overlapping address spaces, not only within AWS but also across any Direct Connect architecture linking to on-premises systems, so that traffic can be routed to the VPC successfully.
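The non-overlap requirement is easy to check before any peering request is made. Below is a minimal sketch using Python's standard `ipaddress` module. The function name and the example ranges are hypothetical, and the client list is assumed to include any on-premises ranges reachable over Direct Connect.

```python
import ipaddress
from itertools import product


def overlapping_ranges(client_cidrs, provider_cidrs):
    """Return every (client, provider) pair of CIDR blocks that overlap.

    client_cidrs should cover the client VPC(s) and any on-premises ranges
    routed over Direct Connect, since none of them may clash with the provider VPC.
    """
    clashes = []
    for a, b in product(client_cidrs, provider_cidrs):
        if ipaddress.ip_network(a).overlaps(ipaddress.ip_network(b)):
            clashes.append((a, b))
    return clashes


# 10.0.128.0/17 sits inside 10.0.0.0/16, so peering these VPCs would fail to route.
print(overlapping_ranges(["10.0.0.0/16", "192.168.0.0/16"], ["10.0.128.0/17"]))
```

With PrivateLink, by contrast, the consumer only sees an ENI with an address from its own subnet, so the provider's address range never has to be reconciled with the client's.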

Creating such a significant connection between client and service supplier is generally undesirable, and quite difficult in complex compliance scenarios.

As discussed, PrivateLink allows services operating behind a Network Load Balancer to be registered as endpoint services in AWS. These services can then be shared with specific users and roles in the same or other accounts.

The services are consumed as an endpoint in the consumer account and exposed as an Elastic Network Interface (in the same way as EC2 is).

The ENI is located in a subnet within the VPC and has a security group, so the usual EC2 controls can be put in place.
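On the consumer side, the endpoint and its security group controls might be set up along these lines with boto3. This is a sketch under stated assumptions: `service_name` is the name the provider shares after registering its service, and the function names, IDs, port and CIDR used with it are hypothetical.

```python
def create_interface_endpoint(ec2, vpc_id, service_name, subnet_ids, security_group_ids):
    """Create a PrivateLink interface endpoint in the consumer VPC.

    `ec2` is assumed to be boto3.client("ec2"), or any stand-in exposing create_vpc_endpoint.
    """
    endpoint = ec2.create_vpc_endpoint(
        VpcEndpointType="Interface",
        VpcId=vpc_id,
        ServiceName=service_name,             # the name the provider shares with consumers
        SubnetIds=subnet_ids,                 # one endpoint ENI is placed in each subnet
        SecurityGroupIds=security_group_ids,  # attached to the endpoint's ENIs
    )["VpcEndpoint"]
    return endpoint["VpcEndpointId"], endpoint.get("NetworkInterfaceIds", [])


def allow_tcp_from(ec2, security_group_id, port, client_cidr):
    """Open a single TCP port on the endpoint's security group to one client CIDR."""
    ec2.authorize_security_group_ingress(
        GroupId=security_group_id,
        IpPermissions=[{
            "IpProtocol": "tcp",
            "FromPort": port,
            "ToPort": port,
            "IpRanges": [{"CidrIp": client_cidr}],
        }],
    )
```

Because access is governed by an ordinary security group, the same rule style that protects EC2 instances decides which clients may reach the third-party service.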

This provides a clean separation of concerns and keeps traffic between the systems private. Vendor staff can access their own account to perform upgrades, patches and fixes without impacting the client account in any way.

Many organisations acting as custodians of open source projects have struggled to commercialise their businesses. Freemium and consulting models can be challenging to execute and to build stability around.

The challenge presented by PrivateLink is for open source custodians to deliver the convenience that Serverless provides without the lock-in. If open source tools can be delivered without the operational headache, they become a real alternative to the more opaque services offered by the cloud platforms.

You know it, you operate it: open source systems can often be tricky to operate, and the promise of having them delivered as a service, at least in some environments, can be a strong incentive to choose the open source option.

There are, of course, challenges. At the moment, PrivateLink services are delivered over a Network Load Balancer, a single TCP entry point, which is not optimal for all open source projects: Cassandra, for example, expects clients to discover and connect to individual nodes in the cluster. We hope that these technical challenges can be overcome or worked around.

OpenCredo was conceived in open source, and we believe this is a strong opportunity for open source software to move front-and-centre in the cloud PaaS space on AWS. From more commercial tools such as those from HashiCorp through to CNCF projects, there are candidate services which could become standards within the cloud space and elsewhere.

Get your -aas in gear.

This blog is written exclusively by the OpenCredo team. We do not accept external contributions.